“Alexa, launch our nukes!”

- Opinion

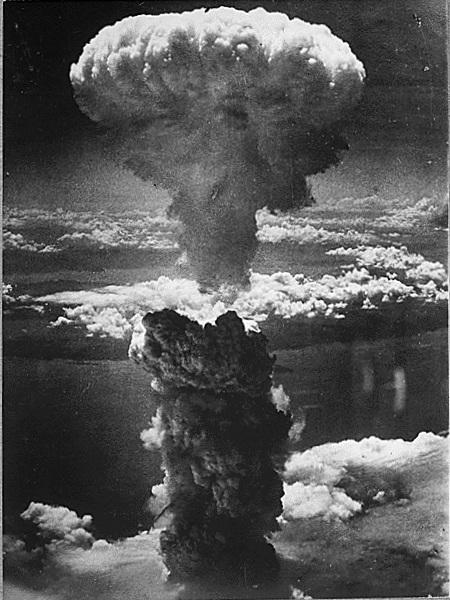

There could be no more consequential decision than launching atomic weapons and possibly triggering a nuclear holocaust. President John F. Kennedy faced just such a moment during the Cuban Missile Crisis of 1962 and, after envisioning the catastrophic outcome of a U.S.-Soviet nuclear exchange, he came to the conclusion that the atomic powers should impose tough barriers on the precipitous use of such weaponry. Among the measures he and other global leaders adopted were guidelines requiring that senior officials, not just military personnel, have a role in any nuclear-launch decision.

That was then, of course, and this is now. And what a now it is! With artificial intelligence, or AI, soon to play an ever-increasing role in military affairs, as in virtually everything else in our lives, the role of humans, even in nuclear decision-making, is likely to be progressively diminished. In fact, in some future AI-saturated world, it could disappear entirely, leaving machines to determine humanity’s fate.

This isn't idle conjecture based on science fiction movies or dystopian novels. It’s all too real, all too here and now, or at least here and soon to be. As the Pentagon and the military commands of the other great powers look to the future, what they see is a highly contested battlefield — some have called it a “hyperwar” environment — where vast swarms of AI-guided robotic weapons will fight each other at speeds far exceeding the ability of human commanders to follow the course of a battle. At such a time, it is thought, commanders might increasingly be forced to rely on ever more intelligent machines to make decisions on what weaponry to employ when and where. At first, this may not extend to nuclear weapons, but as the speed of battle increases and the “firebreak” between them and conventional weaponry shrinks, it may prove impossible to prevent the creeping automatization of even nuclear-launch decision-making.

Such an outcome can only grow more likely as the U.S. military completes a top-to-bottom realignment intended to transform it from a fundamentally small-war, counter-terrorist organization back into one focused on peer-against-peer combat with China and Russia. This shift was mandated by the White House in its December 2017 National Security Strategy. Rather than focusing mainly on weaponry and tactics aimed at combating poorly armed insurgents in never-ending small-scale conflicts, the American military is now being redesigned to fight increasingly well-equipped Chinese and Russian forces in multi-dimensional (air, sea, land, space, cyberspace) engagements involving multiple attack systems (tanks, planes, missiles, rockets) operating with minimal human oversight.

“The major effect/result of all these capabilities coming together will be an innovation warfare has never seen before: the minimization of human decision-making in the vast majority of processes traditionally required to wage war,” observed retired Marine General John Allen and AI entrepreneur Amir Husain. “In this coming age of hyperwar, we will see humans providing broad, high-level inputs while machines do the planning, executing, and adapting to the reality of the mission and take on the burden of thousands of individual decisions with no additional input.”

That “minimization of human decision-making” will have profound implications for the future of combat. Ordinarily, national leaders seek to control the pace and direction of battle to ensure the best possible outcome, even if that means halting the fighting to avoid greater losses or prevent humanitarian disaster. Machines, even very smart machines, are unlikely to be capable of assessing the social and political context of combat, so activating them might well lead to situations of uncontrolled escalation.

It may be years, possibly decades, before machines replace humans in critical military decision-making roles, but that time is on the horizon. When it comes to controlling AI-enabled weapons systems, as Secretary of Defense Jim Mattis put it in a recent interview, “For the near future, there’s going to be a significant human element. Maybe for 10 years, maybe for 15. But not for 100.”

Why AI?

Even five years ago, there were few in the military establishment who gave much thought to the role of AI or robotics when it came to major combat operations. Yes, remotely piloted aircraft (RPA), or drones, have been widely used in Africa and the Greater Middle East to hunt down enemy combatants, but those are largely ancillary (and sometimes CIA) operations, intended to relieve pressure on U.S. commandos and allied forces facing scattered bands of violent extremists. In addition, today’s RPAs are still controlled by human operators, even if from remote locations, and make little use, as yet, of AI-powered target-identification and attack systems. In the future, however, such systems are expected to populate much of any battlespace, replacing humans in many or even most combat functions.

To speed this transformation, the Department of Defense is already spending hundreds of millions of dollars on AI-related research. “We cannot expect success fighting tomorrow’s conflicts with yesterday’s thinking, weapons, or equipment,” Mattis told Congress in April. To ensure continued military supremacy, he added, the Pentagon would have to focus more “investment in technological innovation to increase lethality, including research into advanced autonomous systems, artificial intelligence, and hypersonics.”

Why the sudden emphasis on AI and robotics? It begins, of course, with the astonishing progress made by the tech community — much of it based in Silicon Valley, California — in enhancing AI and applying it to a multitude of functions, including image identification and voice recognition. One of those applications, Alexa Voice Services, is the computer system behind Amazon’s smart speaker that not only can use the Internet to do your bidding but interpret your commands. (“Alexa, play classical music.” “Alexa, tell me today’s weather.” “Alexa, turn the lights on.”) Another is the kind of self-driving vehicle technology that is expected to revolutionize transportation.

Artificial Intelligence is an “omni-use” technology, explain analysts at the Congressional Research Service, a non-partisan information agency, “as it has the potential to be integrated into virtually everything.” It’s also a “dual-use” technology in that it can be applied as aptly to military as civilian purposes. Self-driving cars, for instance, rely on specialized algorithms to process data from an array of sensors monitoring traffic conditions and so decide which routes to take, when to change lanes, and so on. The same technology and reconfigured versions of the same algorithms will one day be applied to self-driving tanks set loose on future battlefields. Similarly, someday drone aircraft — without human operators in distant locales — will be capable of scouring a battlefield for designated targets (tanks, radar systems, combatants), determining that something it “sees” is indeed on its target list, and “deciding” to launch a missile at it.

It doesn’t take a particularly nimble brain to realize why Pentagon officials would seek to harness such technology: they think it will give them a significant advantage in future wars. Any full-scale conflict between the U.S. and China or Russia (or both) would, to say the least, be extraordinarily violent, with possibly hundreds of warships and many thousands of aircraft and armored vehicles all focused in densely packed battlespaces. In such an environment, speed in decision-making, deployment, and engagement will undoubtedly prove a critical asset. Given future super-smart, precision-guided weaponry, whoever fires first will have a better chance of success, or even survival, than a slower-firing adversary. Humans can move swiftly in such situations when forced to do so, but future machines will act far more swiftly, while keeping track of more battlefield variables.

As General Paul Selva, vice chairman of the Joint Chiefs of Staff, told Congress in 2017:

“It is very compelling when one looks at the capabilities that artificial intelligence can bring to the speed and accuracy of command and control and the capabilities that advanced robotics might bring to a complex battlespace, particularly machine-to-machine interaction in space and cyberspace, where speed is of the essence.”

Aside from aiming to exploit AI in the development of its own weaponry, U.S. military officials are intensely aware that their principal adversaries are also pushing ahead in the weaponization of AI and robotics, seeking novel ways to overcome America’s advantages in conventional weaponry. According to the Congressional Research Service, for instance, China is investing heavily in the development of artificial intelligence and its application to military purposes. Though lacking the tech base of either China or the United States, Russia is similarly rushing the development of AI and robotics. Any significant Chinese or Russian lead in such emerging technologies that might threaten this country’s military superiority would be intolerable to the Pentagon.

Not surprisingly then, in the fashion of past arms races (from the pre-World War I development of battleships to Cold War nuclear weaponry), an “arms race in AI” is now underway, with the U.S., China, Russia, and other nations (including Britain, Israel, and South Korea) seeking to gain a critical advantage in the weaponization of artificial intelligence and robotics. Pentagon officials regularly cite Chinese advances in AI when seeking congressional funding for their projects, just as Chinese and Russian military officials undoubtedly cite American ones to fund their own pet projects. In true arms race fashion, this dynamic is already accelerating the pace of development and deployment of AI-empowered systems and ensuring their future prominence in warfare.

Command and control

As this arms race unfolds, artificial intelligence will be applied to every aspect of warfare, from logistics and surveillance to target identification and battle management. Robotic vehicles will accompany troops on the battlefield, carrying supplies and firing on enemy positions; swarms of armed drones will attack enemy tanks, radars, and command centers; unmanned undersea vehicles, or UUVs, will pursue both enemy submarines and surface ships. At the outset of combat, all these instruments of war will undoubtedly be controlled by humans. As the fighting intensifies, however, communications between headquarters and the front lines may well be lost and such systems will, according to military scenarios already being written, be on their own, empowered to take lethal action without further human intervention.

Most of the debate over the application of AI and its future battlefield autonomy has been focused on the morality of empowering fully autonomous weapons — sometimes called “killer robots” — with a capacity to make life-and-death decisions on their own, or on whether the use of such systems would violate the laws of war and international humanitarian law. Such statutes require that war-makers be able to distinguish between combatants and civilians on the battlefield and spare the latter from harm to the greatest extent possible. Advocates of the new technology claim that machines will indeed become smart enough to sort out such distinctions for themselves, while opponents insist that they will never prove capable of making critical distinctions of that sort in the heat of battle and would be unable to show compassion when appropriate. A number of human rights and humanitarian organizations have even launched the Campaign to Stop Killer Robots with the goal of adopting an international ban on the development and deployment of fully autonomous weapons systems.

In the meantime, a perhaps even more consequential debate is emerging in the military realm over the application of AI to command-and-control (C2) systems — that is, to ways senior officers will communicate key orders to their troops. Generals and admirals always seek to maximize the reliability of C2 systems to ensure that their strategic intentions will be fulfilled as thoroughly as possible. In the current era, such systems are deeply reliant on secure radio and satellite communications systems that extend from headquarters to the front lines. However, strategists worry that, in a future hyperwar environment, such systems could be jammed or degraded just as the speed of the fighting begins to exceed the ability of commanders to receive battlefield reports, process the data, and dispatch timely orders. Consider this a functional definition of the infamous fog of war multiplied by artificial intelligence — with defeat a likely outcome. The answer to such a dilemma for many military officials: let the machines take over these systems, too. As a report from the Congressional Research Service puts it, in the future “AI algorithms may provide commanders with viable courses of action based on real-time analysis of the battle-space, which would enable faster adaptation to unfolding events.”

And someday, of course, it’s possible to imagine that the minds behind such decision-making would cease to be human ones. Incoming data from battlefield information systems would instead be channeled to AI processors focused on assessing imminent threats and, given the time constraints involved, executing what they deemed the best options without human instructions.

Pentagon officials deny that any of this is the intent of their AI-related research. They acknowledge, however, that they can at least imagine a future in which other countries delegate decision-making to machines and the U.S. sees no choice but to follow suit, lest it lose the strategic high ground. “We will not delegate lethal authority for a machine to make a decision,” then-Deputy Secretary of Defense Robert Work told Paul Scharre of the Center for a New American Security in a 2016 interview. But he added the usual caveat: in the future, “we might be going up against a competitor that is more willing to delegate authority to machines than we are and as that competition unfolds, we’ll have to make decisions about how to compete.”

The doomsday decision

The assumption in most of these scenarios is that the U.S. and its allies will be engaged in a conventional war with China and/or Russia. Keep in mind, then, that the very nature of such a future AI-driven hyperwar will only increase the risk that conventional conflicts could cross a threshold that’s never been crossed before: an actual nuclear war between two nuclear states. And should that happen, those AI-empowered C2 systems could, sooner or later, find themselves in a position to launch atomic weapons.

Such a danger arises from the convergence of multiple advances in technology: not just AI and robotics, but the development of conventional strike capabilities like hypersonic missiles capable of flying at five or more times the speed of sound, electromagnetic rail guns, and high-energy lasers. Such weaponry, though non-nuclear, when combined with AI surveillance and target-identification systems, could even attack an enemy’s mobile retaliatory weapons and so threaten to eliminate its ability to launch a response to any nuclear attack. Given such a “use 'em or lose 'em” scenario, any power might be inclined not to wait but to launch its nukes at the first sign of possible attack, or even, fearing loss of control in an uncertain, fast-paced engagement, delegate launch authority to its machines. And once that occurred, it could prove almost impossible to prevent further escalation.

The question then arises: Would machines make better decisions than humans in such a situation? They certainly are capable of processing vast amounts of information over brief periods of time and weighing the pros and cons of alternative actions in a thoroughly unemotional manner. But machines also make military mistakes and, above all, they lack the ability to reflect on a situation and conclude: “Stop this madness. No battle advantage is worth global human annihilation.”

As Paul Scharre put it in Army of None, a new book on AI and warfare, “Humans are not perfect, but they can empathize with their opponents and see the bigger picture. Unlike humans, autonomous weapons would have no ability to understand the consequences of their actions, no ability to step back from the brink of war.”

So maybe we should think twice about giving some future militarized version of Alexa the power to launch a machine-made Armageddon.

- Michael T. Klare writes regularly for TomDispatch (where this article originated). He is the five-college professor emeritus of peace and world security studies at Hampshire College and a senior visiting fellow at the Arms Control Association. His most recent book is The Race for What’s Left. His next book, All Hell Breaking Loose: Climate Change, Global Chaos, and American National Security, will be published in 2019.

Copyright ©2018 Michael T. Klare — used by permission of Agence Global
